Edge computing reduces streaming latency by processing and serving data closer to the end user, which shortens response times and improves the viewing experience. It shifts work from centralized servers to edge servers located near viewers, cutting the distance, and therefore the time, that content must travel. In this article, we explore how edge computing achieves this, the technologies involved, and its impact on streaming services.

Understanding Streaming Latency

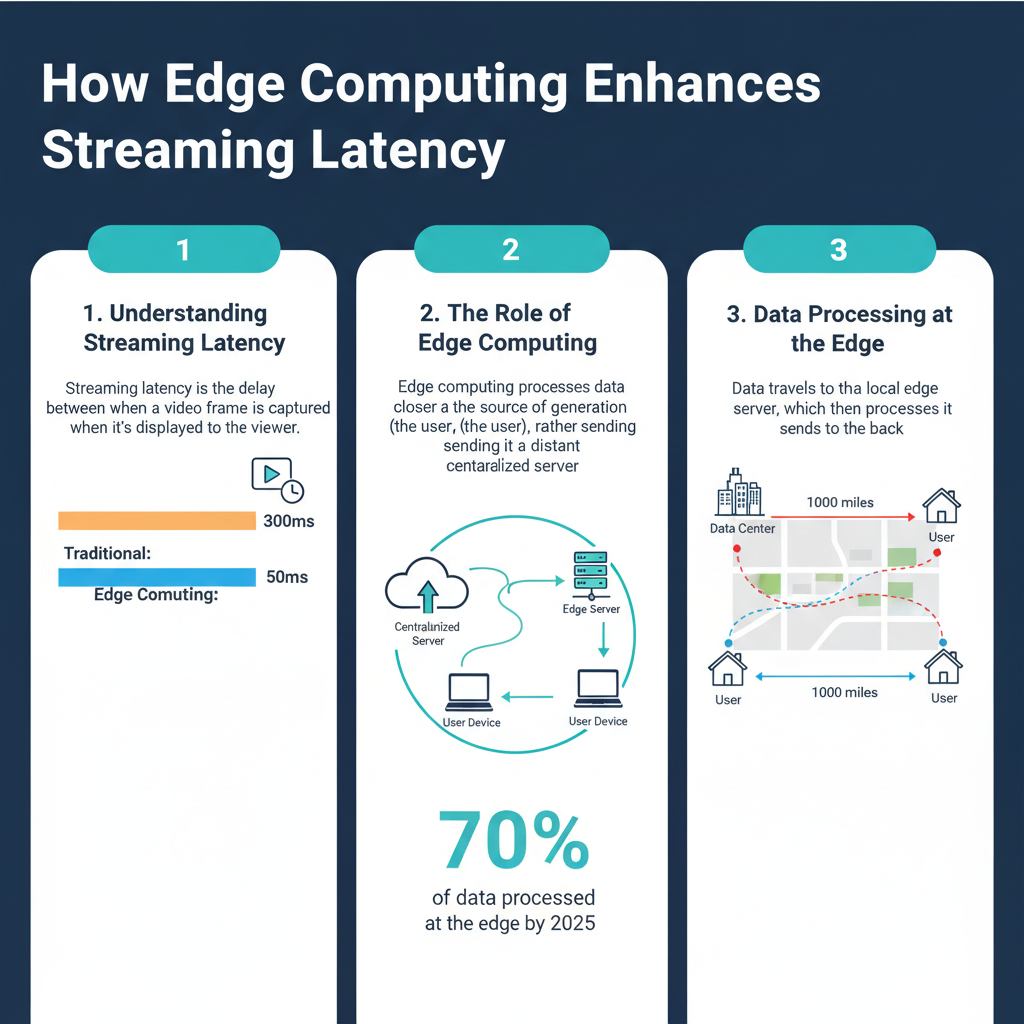

Streaming latency is the delay between a request or event and the corresponding playback. For on-demand video, it is the time from pressing play until the first frame appears; for live streams, it is the gap between something happening and viewers seeing it. This delay directly affects user satisfaction: slow, distant delivery paths lead to long start-up times and buffering, and players may drop to lower video quality to keep playing, which frustrates viewers. For streaming services, low latency is essential for real-time experiences, particularly live sports broadcasts and game streaming.

Common causes of high latency in streaming services include network congestion, long-distance data transmission, and inefficient data processing methods. Each of these factors can contribute to delays that negatively impact how quickly users can access and enjoy their content. Understanding these latency factors is crucial for identifying solutions, such as edge computing.

The Role of Edge Computing

Edge computing is a distributed computing paradigm that brings computation and data storage closer to where they are needed. Its key principles are shortening the distance data must travel and processing it near its source or its consumer. Besides improving speed, this approach can reduce the traffic carried over long-haul network links and, when sensitive data stays local, help with privacy and security.

Traditional cloud computing relies on centralized data centers that can be hundreds or even thousands of miles away from the end user. Edge computing instead distributes resources across many locations near users. This difference matters for latency: requests can be handled nearby and responses delivered in near real time, rather than every request traveling to a distant central server and back.
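To put numbers on the distance argument, here is a minimal sketch that estimates the minimum round-trip propagation delay over fiber. It assumes light travels roughly 200 km per millisecond in optical fiber (about two-thirds of its speed in a vacuum) and ignores routing detours, queuing, and processing, so the results are lower bounds. The distances used (4,000 km, roughly 2,500 miles, versus 50 km) are hypothetical.

```python
# Assumption: light in optical fiber covers roughly 200 km per millisecond.
# Real network paths are longer than straight-line distance and add router
# and queuing delay, so these figures are lower bounds.
FIBER_KM_PER_MS = 200.0


def propagation_rtt_ms(distance_km: float) -> float:
    """Minimum round-trip propagation delay over fiber for a one-way distance."""
    if distance_km < 0:
        raise ValueError("distance must be non-negative")
    return 2 * distance_km / FIBER_KM_PER_MS


if __name__ == "__main__":
    # Hypothetical distances: a central data center vs. a nearby edge server.
    for label, km in [("central data center", 4000), ("edge server", 50)]:
        print(f"{label:>20}: {propagation_rtt_ms(km):5.1f} ms minimum RTT")
```

Under these assumptions, the distant data center costs at least 40 ms per round trip before any processing happens, while the nearby edge server costs about half a millisecond.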

Data Processing at the Edge

The location of data processing is central to understanding latency. In traditional systems, data is sent to a central server for processing and the result is sent back to the user. This round trip can introduce significant delays, especially for users far from the data center. Edge computing, by contrast, localizes processing on edge servers that are much closer to the end user.
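The cost of distance is multiplied because a single request usually involves several round trips. The sketch below estimates time to first byte for a new connection under stated assumptions: TCP plus TLS 1.3 setup takes about two round trips, the request itself adds one more, and server work takes the same (hypothetical) 10 ms at the edge and at the origin. The RTT values are also hypothetical.

```python
# Assumptions (illustrative only):
# - a new connection over TCP plus TLS 1.3 needs about 2 round trips of setup,
# - the HTTP request/response adds 1 more round trip,
# - server-side work takes the same time at the edge and at the origin.


def time_to_first_byte_ms(rtt_ms: float, setup_round_trips: int = 2, server_ms: float = 10.0) -> float:
    """Rough time to first byte: connection setup, one request/response exchange, server work."""
    return (setup_round_trips + 1) * rtt_ms + server_ms


if __name__ == "__main__":
    # Hypothetical RTTs: 80 ms to a distant origin, 10 ms to a nearby edge server.
    print("origin:", time_to_first_byte_ms(80.0), "ms")  # 3 * 80 + 10
    print("edge:  ", time_to_first_byte_ms(10.0), "ms")  # 3 * 10 + 10
```

Because each round trip is paid several times per new connection, a shorter RTT to an edge server pays off more than once; reused connections skip the setup term.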

The benefits of localized data processing are substantial. Besides cutting latency, it enables real-time analytics and decision-making close to viewers, which can enhance the user experience. In a live sports streaming application, for instance, instant replays and live statistics can be generated and delivered with little delay, keeping viewers engaged with the broadcast.

Content Delivery Networks (CDNs) and Edge Computing

Content Delivery Networks (CDNs) are an integral part of the streaming landscape and one of the most widespread forms of edge infrastructure. A CDN is a distributed network of servers that cache content close to users, reducing latency and improving load times. By combining CDN caching with edge computing (running application logic on those same distributed servers), streaming services can further improve performance.
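Edge caching can be illustrated with a least-recently-used (LRU) cache that answers repeat requests locally and contacts the origin only on a miss. This is a minimal sketch: the `EdgeCache` class and the origin callback are hypothetical, and production CDN caches also handle expiry (TTLs), byte-range requests, and cache-control headers.

```python
from collections import OrderedDict
from typing import Callable


class EdgeCache:
    """A minimal LRU cache standing in for an edge server in front of an origin."""

    def __init__(self, capacity: int, fetch_from_origin: Callable[[str], bytes]) -> None:
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self.capacity = capacity
        self.fetch_from_origin = fetch_from_origin
        self._store: "OrderedDict[str, bytes]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, key: str) -> bytes:
        if key in self._store:
            # Cache hit: serve locally and mark the entry as most recently used.
            self._store.move_to_end(key)
            self.hits += 1
            return self._store[key]
        # Cache miss: make the long trip to the origin once, then keep a copy.
        self.misses += 1
        value = self.fetch_from_origin(key)
        self._store[key] = value
        if len(self._store) > self.capacity:
            # Evict the least recently used entry.
            self._store.popitem(last=False)
        return value


if __name__ == "__main__":
    origin_requests = []

    def origin(key: str) -> bytes:
        origin_requests.append(key)
        return f"<video data for {key}>".encode()

    cache = EdgeCache(capacity=2, fetch_from_origin=origin)
    for segment in ["seg1.ts", "seg2.ts", "seg1.ts", "seg3.ts", "seg2.ts"]:
        cache.get(segment)
    print("hits:", cache.hits, "misses:", cache.misses, "origin fetches:", origin_requests)
```

If a fraction `h` of requests hit the edge cache, average latency is roughly `h * edge_latency + (1 - h) * origin_latency`, which is why CDNs work to keep popular content cached.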

Major CDN providers such as Akamai and Cloudflare have added edge technologies to enhance streaming. They place servers in many geographic locations so that content can be served from the nearest edge server. For example, when a user in Europe streams a video from a US-based platform, the CDN can serve it from a European server that already holds a cached copy, avoiding a transatlantic round trip and reducing latency and buffering.
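The "nearest edge server" idea can be sketched as picking the point of presence (PoP) with the smallest great-circle distance to the user. Real CDNs generally steer users with DNS-based or anycast routing informed by network measurements rather than geography alone, so treat this as a simplification; the PoP names and coordinates below are hypothetical.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius

# Hypothetical points of presence: name -> (latitude, longitude) in degrees.
EDGE_SERVERS = {
    "frankfurt": (50.11, 8.68),
    "london": (51.51, -0.13),
    "new-york": (40.71, -74.01),
}


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points given in degrees (haversine formula)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def nearest_edge(lat: float, lon: float, servers: dict = EDGE_SERVERS) -> str:
    """Return the name of the server closest to the user's coordinates."""
    return min(servers, key=lambda name: haversine_km(lat, lon, *servers[name]))


if __name__ == "__main__":
    print(nearest_edge(48.86, 2.35))    # a viewer in Paris
    print(nearest_edge(42.36, -71.06))  # a viewer in Boston
```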

Real-World Applications and Case Studies

Numerous streaming platforms have implemented edge computing to improve latency and overall performance. A notable case is Netflix, which has invested heavily in edge delivery through Open Connect, its own content delivery network. By partnering with ISPs and deploying its own caching servers inside their networks, Netflix can serve much of its video from locations close to subscribers, which is intended to reduce buffering for users around the world.

Serving content from nearby caches in this way is meant to give viewers fewer interruptions and smoother playback, particularly during peak viewing hours, when congestion on long-distance network links is most likely.

Another example is Twitch, the leading live-streaming platform for gamers, which uses edge infrastructure to deliver live broadcasts with minimal delay. Keeping that delay low is vital for viewer engagement during fast-paced gaming competitions, which illustrates how important edge computing has become for live streaming services.

Future Trends in Edge Computing and Streaming

The future of edge computing in streaming services looks promising, with several trends on the horizon. One is the integration of artificial intelligence (AI) and machine learning (ML) at the edge, which could further optimize content delivery and personalize user experiences. For example, models running on edge servers could analyze viewer behavior in real time and recommend content likely to interest each viewer, without adding a round trip to a central data center.

Emerging technologies such as 5G networks will also shape the evolution of edge computing. With higher throughput and lower latency than earlier mobile networks, 5G is expected to support more demanding edge applications, paving the way for new streaming experiences such as augmented reality (AR) and virtual reality (VR) content delivery.

Challenges and Considerations

While the benefits of edge computing are clear, there are also challenges to consider. Implementing edge computing solutions can be complex and costly, particularly for smaller streaming services. Businesses must carefully assess their infrastructure needs and determine the most effective balance between edge and cloud strategies.

Additionally, security concerns may arise with decentralized data processing. Ensuring that user data remains secure at the edge is essential, requiring robust encryption and compliance with data protection regulations. Streaming services must remain vigilant in addressing these challenges to fully capitalize on the advantages of edge computing.
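One common way to enforce access control at the edge is token authentication with signed, expiring URLs: the origin signs each URL with a secret key shared with the edge, and the edge checks the signature and expiry before serving content, without contacting the origin. The sketch below uses HMAC-SHA256 from Python's standard library; the URL layout (`expires` and `sig` query parameters) and the key are hypothetical and do not reproduce any particular CDN's token format. It complements, rather than replaces, TLS encryption in transit.

```python
import hashlib
import hmac
import time
from typing import Optional
from urllib.parse import parse_qs, urlencode, urlsplit

# Hypothetical shared secret; in practice it would come from a secrets store.
SECRET_KEY = b"example-shared-secret"


def _signature(path: str, expires_at: int, key: bytes) -> str:
    """HMAC-SHA256 over the path and expiry time, hex-encoded."""
    message = f"{path}:{expires_at}".encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def sign_url(path: str, expires_at: int, key: bytes = SECRET_KEY) -> str:
    """Origin side: append an expiry timestamp and signature to the path."""
    query = urlencode({"expires": expires_at, "sig": _signature(path, expires_at, key)})
    return f"{path}?{query}"


def verify_url(url: str, now: Optional[float] = None, key: bytes = SECRET_KEY) -> bool:
    """Edge side: accept only if the signature matches and the link has not expired."""
    parts = urlsplit(url)
    params = parse_qs(parts.query)
    try:
        expires_at = int(params["expires"][0])
        signature = params["sig"][0]
    except (KeyError, IndexError, ValueError):
        return False  # missing or malformed token
    current = time.time() if now is None else now
    if current > expires_at:
        return False  # link has expired
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(_signature(parts.path, expires_at, key), signature)


if __name__ == "__main__":
    url = sign_url("/videos/episode-1/seg1.ts", expires_at=int(time.time()) + 300)
    print(url, verify_url(url))
```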

In summary, edge computing reduces streaming latency by moving processing and content delivery closer to viewers. Shorter delivery paths and localized processing help streaming services meet the expectations of today’s consumers. As the technology evolves, staying informed about edge computing solutions and their implications will be vital for businesses that want to improve their streaming offerings. Whether you’re a developer, a service provider, or a viewer, it is worth considering how edge computing affects your streaming experience and where it can improve performance and satisfaction.

Frequently Asked Questions

What is edge computing and how does it relate to streaming latency?

Edge computing is the practice of processing and storing data close to where it is generated or consumed, rather than relying solely on a centralized data center. For streaming, this proximity shortens the time data takes to travel, which lowers latency. By deploying edge servers near users, providers can deliver content faster and more efficiently, giving viewers smoother playback with minimal delays.

How does edge computing improve the quality of video streaming?

Edge computing improves video streaming quality by reducing the buffering and lag that slow, distant delivery paths cause. Because video content can be cached on edge servers near viewers, players can fetch it quickly and sustain higher-quality playback. Viewers can therefore watch high-definition content with fewer interruptions, even during peak usage times.

Why is low latency important for live streaming applications?

Low latency is crucial for live streaming applications because it ensures real-time interaction between broadcasters and viewers. High latency can disrupt the viewer experience, leading to delayed responses and a disconnect during events like sports or live Q&A sessions. By leveraging edge computing, providers can deliver content with minimal delay, enhancing viewer engagement and satisfaction.
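A rough model shows where edge delivery fits into live latency for segment-based protocols such as HLS. RFC 8216 advises HLS clients not to start live playback at a segment that begins less than three target durations from the end of the playlist, so segment length sets a floor that edge servers alone cannot remove; edge delivery shrinks the network term instead. The encoding and delivery times below are hypothetical placeholders.

```python
def estimated_live_delay_s(
    target_duration_s: float,
    segments_behind: int = 3,
    encode_and_package_s: float = 0.0,
    delivery_s: float = 0.0,
) -> float:
    """Rough delay between a live event and playback for segment-based streaming.

    segments_behind=3 mirrors the HLS guidance of starting about three target
    durations from the live edge; the other terms are assumptions.
    """
    return segments_behind * target_duration_s + encode_and_package_s + delivery_s


if __name__ == "__main__":
    # Hypothetical: 1.5 s to encode/package; delivery from a distant origin vs. an edge.
    for label, delivery in [("origin", 0.5), ("edge", 0.05)]:
        for seg in (6.0, 2.0):
            delay = estimated_live_delay_s(seg, encode_and_package_s=1.5, delivery_s=delivery)
            print(f"{label:>6}, {seg:.0f} s segments: ~{delay:.2f} s")
```

Under these assumptions, segment duration dominates the total, so fast edge delivery is most effective when paired with short segments or a low-latency protocol extension such as Low-Latency HLS.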

What are the best practices for implementing edge computing to reduce streaming latency?

To implement edge computing effectively and reduce streaming latency, providers should place edge servers close to their user base. Configuring content delivery networks (CDNs) to cache popular content at the edge further reduces load times. Regularly monitoring performance metrics and user feedback can also guide adjustments that improve service quality.
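Monitoring can start with two numbers per edge location: tail latency and cache hit ratio. The sketch below summarizes request logs with Python's `statistics` module; the `RequestLog` record is an assumed shape rather than any CDN's log format, and the sample data is hypothetical. Percentiles such as p95 matter because averages hide the slow requests that viewers notice as stalls.

```python
import statistics
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class RequestLog:
    """Hypothetical per-request record collected at an edge server."""
    latency_ms: float
    cache_hit: bool


def summarize(logs: List[RequestLog]) -> Dict[str, float]:
    """Median and 95th-percentile latency plus the cache hit ratio."""
    if len(logs) < 2:
        raise ValueError("need at least two samples to compute percentiles")
    latencies = [r.latency_ms for r in logs]
    # quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
    cuts = statistics.quantiles(latencies, n=100)
    return {
        "p50_ms": statistics.median(latencies),
        "p95_ms": cuts[94],
        "cache_hit_ratio": sum(r.cache_hit for r in logs) / len(logs),
    }


if __name__ == "__main__":
    # Hypothetical sample: mostly fast cache hits, a few slow origin fetches.
    sample = [RequestLog(12.0, True)] * 18 + [RequestLog(180.0, False)] * 2
    print(summarize(sample))
```

A falling hit ratio or a rising p95 at one location is a signal to revisit cache sizing or server placement there.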

Which industries benefit the most from edge computing in streaming applications?

Several industries benefit significantly from edge computing in streaming applications, particularly gaming, entertainment, and telecommunications. In gaming, reduced latency improves real-time interaction; in entertainment, it provides smoother viewing of live events. Telecommunications companies use edge computing to deliver high-quality video calls and streaming services, which makes it an important technology across these sectors.
