AI detects deepfake videos on streaming platforms by using algorithms and machine learning models that analyze video content for inconsistencies. These systems are central to identifying manipulated media, which helps protect viewers from misinformation and preserves the integrity of digital content. This article explains how deepfake technology works, the role AI plays in detecting it, the challenges detection faces, and why these systems matter for a trustworthy viewing experience.

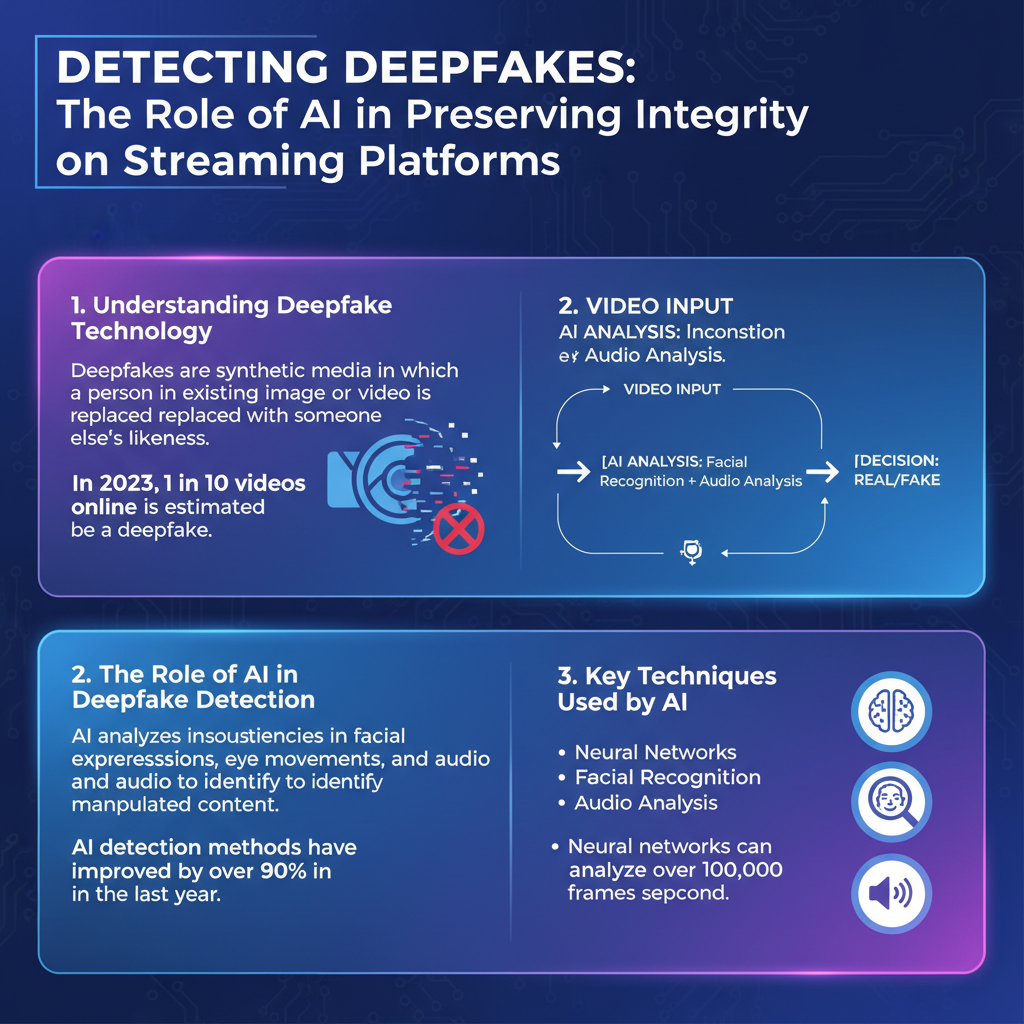

Understanding Deepfake Technology

Deepfake technology uses artificial intelligence to manipulate video or audio so that one person's likeness or voice is replaced with another's. Common approaches include autoencoders and generative adversarial networks (GANs). A GAN pairs two neural networks that compete against each other: a generator produces fake content, while a discriminator tries to tell that content apart from real samples. This cat-and-mouse game pushes the generator toward increasingly convincing fakes.
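
The competition between the two networks is usually formalized as the minimax objective from Goodfellow et al. (2014). Here $G$ is the generator (the network that produces fakes), $D$ is the discriminator (the network that tries to detect them) and outputs the probability that its input is real, $p_{\text{data}}$ is the distribution of real samples, and $p_z$ is the noise distribution that $G$ draws its inputs from:

```latex
\min_G \max_D \; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
```

$D$ is trained to maximize this value by scoring real samples near 1 and generated ones near 0, while $G$ is trained to minimize it by making $D(G(z))$ large. Each side's improvement forces the other to improve, which is why the fakes become more convincing over time.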

While deepfake technology has a notorious reputation for potential misuse, such as fabricated news videos or non-consensual intimate imagery, it also has legitimate applications. In the film industry, for instance, it can be used to de-age actors or to create realistic visual effects without extensive makeup or traditional CGI. Organizations have also used it for education, for example in immersive training simulations or historical reenactments. Because the line between beneficial and harmful uses is often blurry, robust detection methods are necessary.

The Role of AI in Deepfake Detection

AI plays a central role in detecting deepfakes by analyzing video frames for subtle anomalies that may indicate manipulation. Rather than looking only for obvious discrepancies, detection models examine fine-grained details of the footage, such as inconsistencies in facial movements, unusual blinking patterns, and the way light reflects off the skin.
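
Blink analysis is often built on the eye aspect ratio (EAR) of Soukupová and Čech (2016), which drops toward zero when the eye closes. The minimal sketch below assumes six eye landmarks per frame are already available from some face-landmark detector (not shown). The 0.2 EAR threshold, the two-frame minimum blink length, and the blinks-per-minute cutoff are illustrative assumptions, not tuned values.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def eye_aspect_ratio(eye: Sequence[Point]) -> float:
    """EAR from six landmarks ordered p1..p6: p1 and p4 are the eye corners,
    p2 and p3 lie on the upper lid, p6 and p5 on the lower lid."""
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


def count_blinks(ear_series: Sequence[float], threshold: float = 0.2,
                 min_frames: int = 2) -> int:
    """Count runs of at least `min_frames` consecutive frames with EAR below `threshold`."""
    blinks = 0
    run = 0
    for ear in ear_series:
        if ear < threshold:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:  # the clip ended while the eye was still closed
        blinks += 1
    return blinks


def blink_rate_suspicious(ear_series: Sequence[float], fps: float,
                          min_blinks_per_minute: float = 2.0, **blink_kwargs) -> bool:
    """True if the clip shows fewer blinks per minute than the (hypothetical) cutoff."""
    minutes = len(ear_series) / fps / 60.0
    if minutes == 0:
        return False
    rate = count_blinks(ear_series, **blink_kwargs) / minutes
    return rate < min_blinks_per_minute


# Usage: an open eye with made-up landmark coordinates.
open_eye = [(0, 0), (1, 1), (2, 1), (3, 0), (2, -1), (1, -1)]
print(round(eye_aspect_ratio(open_eye), 3))  # 0.667
```

A one-minute, 30 fps clip in which the eye never closes yields zero blinks and is flagged, while a clip with ten blinks is not. On its own, a low blink rate is at most a weak cue, so a check like this would be one input among many.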

Machine learning models for this task are trained on large datasets containing both authentic and manipulated videos. Exposure to a wide variety of content teaches the models to recognize the patterns and features that distinguish real videos from altered ones. The trained models can then flag suspicious content for human review, which is much faster than relying on human analysis alone.
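
To make the train-then-flag loop concrete, here is a minimal sketch that swaps in plain logistic regression for the deep networks real detectors use. Each video is reduced to two hypothetical summary features (`blink_deficit` and `landmark_jitter`, names invented for this example), and the six training rows are made-up toy values, not real data. Videos whose predicted probability of being fake reaches an assumed 0.7 threshold are routed to human review.

```python
import math
from typing import List, Sequence, Tuple

Model = Tuple[List[float], float]


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def train_logistic(X: Sequence[Sequence[float]], y: Sequence[int],
                   lr: float = 0.5, epochs: int = 500) -> Model:
    """Fit logistic regression with batch gradient descent on log-loss.
    Labels: 1 = manipulated, 0 = authentic."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            # Derivative of log-loss with respect to the logit is (p - y).
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(d):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def fake_probability(model: Model, x: Sequence[float]) -> float:
    w, b = model
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


def flag_for_review(model: Model, x: Sequence[float], threshold: float = 0.7) -> bool:
    """Route a video to human moderators when the model is fairly sure it is fake."""
    return fake_probability(model, x) >= threshold


# Toy training set: each row is [blink_deficit, landmark_jitter] (hypothetical features).
X = [[0.10, 0.20], [0.20, 0.10], [0.15, 0.25],   # authentic
     [0.90, 0.80], [0.80, 0.95], [0.85, 0.70]]   # manipulated
y = [0, 0, 0, 1, 1, 1]
model = train_logistic(X, y)
```

With this toy data, a new video with features `[0.95, 0.90]` is flagged for review while `[0.05, 0.10]` is not. A real system would train on far more labeled clips and would choose the threshold by weighing missed fakes against false alarms.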

Key Techniques Used by AI

Feature extraction is one of the most important techniques AI uses for deepfake detection. It involves identifying specific elements within the video that may reveal alterations. For example, a system can track facial landmarks (points such as the corners of the eyes, the tip of the nose, and the edges of the lips) and examine how they move relative to one another. If a face behaves unnaturally, for instance with inconsistent eye movements or lip movements that do not match the speech, the system may raise an alert.
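
One simple way to check how landmarks move relative to one another is to measure a few distances between them in every frame, divide by the distance between the eyes, and look for abrupt frame-to-frame jumps, which may appear when a swapped face flickers. This sketch assumes landmark coordinates come from some upstream detector; the landmark names, the chosen pairs, and the 0.1 jump threshold are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float]
Frame = Dict[str, Point]  # landmark name -> (x, y) pixel coordinates

# Landmark pairs whose relative distances should stay fairly stable (assumed choice).
PAIRS = [("left_eye", "nose"), ("right_eye", "nose"), ("nose", "mouth")]


def normalized_geometry(frame: Frame) -> List[float]:
    """Pairwise distances divided by the inter-ocular distance, so the values do not
    change when the face is translated, rotated in the image plane, or rescaled."""
    iod = math.dist(frame["left_eye"], frame["right_eye"])
    return [math.dist(frame[a], frame[b]) / iod for a, b in PAIRS]


def geometry_jumps(frames: Sequence[Frame]) -> List[float]:
    """Largest change in normalized geometry between each pair of consecutive frames."""
    geoms = [normalized_geometry(f) for f in frames]
    return [max(abs(a - b) for a, b in zip(g0, g1))
            for g0, g1 in zip(geoms, geoms[1:])]


def suspicious_transitions(frames: Sequence[Frame], max_jump: float = 0.1) -> List[int]:
    """Indices i where the face geometry jumps too much from frame i to frame i + 1."""
    return [i for i, jump in enumerate(geometry_jumps(frames)) if jump > max_jump]
```

Because the features are 2-D distances, genuine head turns toward or away from the camera also change them, so a practical version would need to account for head pose before treating a jump as suspicious.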

Deep learning models are another important tool. These neural networks learn hierarchical representations of data, which lets them pick up differences between real and fake content that hand-designed rules miss. Researchers have, for example, trained convolutional neural networks (CNNs) to detect deepfakes from pixel-level artifacts that are invisible to the human eye. Such models can perform well on the kinds of manipulation they were trained on, although their accuracy tends to drop on generation methods they have not seen. Techniques like these are vital as deepfakes become more sophisticated.
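
A convolutional layer slides small kernels over an image and sums weighted pixel neighborhoods. In a CNN detector those kernels are learned from data; in the sketch below a single fixed Laplacian kernel is chosen by hand instead, so it illustrates only the convolution operation and the kind of high-frequency, pixel-level signal such layers can respond to (for example, the checkerboard patterns some upsampling layers leave behind). It is not a working deepfake detector, and images are assumed to be grayscale lists of rows with values in [0, 1].

```python
from typing import List, Sequence

Image = Sequence[Sequence[float]]

# Fixed high-pass kernel: responds to local deviations from the neighborhood average.
LAPLACIAN = [[0, 1, 0],
             [1, -4, 1],
             [0, 1, 0]]


def conv2d_valid(img: Image, kernel: Image) -> List[List[float]]:
    """2-D 'valid' cross-correlation, which is what deep-learning libraries call convolution."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(img) - kh + 1, len(img[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0.0
            for di in range(kh):
                for dj in range(kw):
                    acc += img[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        out.append(row)
    return out


def high_frequency_energy(img: Image) -> float:
    """Mean squared Laplacian response: zero for smooth regions, large for fine pixel patterns."""
    response = conv2d_valid(img, LAPLACIAN)
    values = [v for row in response for v in row]
    return sum(v * v for v in values) / len(values)
```

A flat region or a linear brightness ramp gives an energy of 0, while a one-pixel checkerboard gives 16, the largest value this kernel can produce for pixels in [0, 1].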

Challenges in Detecting Deepfakes

Despite advances in AI, detecting deepfakes remains difficult. One major issue is the rapid evolution of deepfake technology itself: as creators become more skilled at manipulating videos, they also learn to evade existing detectors. Early research, for example, found that some deepfakes blinked far less often than real people, but newer generation methods can produce natural-looking blinks, which weakens that cue. Detection algorithms therefore have to be updated continually to keep pace with new generation techniques.

Additionally, the variability in the quality and style of deepfakes complicates identification. Some deepfakes are crudely made with noticeable artifacts, while others are so well-crafted that even experts may struggle to distinguish them from real footage. This inconsistency in quality means that detection systems must be robust enough to handle a wide spectrum of potential manipulations, making it a daunting task for AI developers.

The Importance of AI Detection on Streaming Platforms

Implementing AI detection technologies on streaming platforms is crucial for several reasons. First and foremost, these systems protect viewers from misinformation, which can lead to serious repercussions in the real world. For instance, deepfake videos could be used to manipulate public opinion during elections or to create false narratives about individuals, damaging reputations and leading to societal unrest.

Moreover, maintaining the integrity of content is essential for the trustworthiness of streaming platforms. Users are more likely to engage with services that they believe prioritize authenticity. By incorporating AI detection systems, platforms can reassure their audiences that they are taking proactive measures to combat the spread of deceptive media. This not only enhances user experience but also fosters a healthier online environment.

Future Developments in AI Deepfake Detection

Looking ahead, detection algorithms are likely to keep improving as the underlying technology advances. More sophisticated machine learning techniques, including unsupervised learning, may allow AI systems to identify deepfakes without relying on large labeled datasets, which could make them more adaptable to new manipulation methods.
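
Full unsupervised detectors are beyond a short example, but the core idea of working without labels can be illustrated with a much simpler stand-in: a robust outlier rule applied to an unlabeled batch of per-video scores (for example, a hypothetical landmark-jitter score). The batch median and median absolute deviation (MAD) define what is typical, and the 3.5 cutoff follows the modified z-score rule commonly attributed to Iglewicz and Hoaglin; all example values are illustrative.

```python
import math
from statistics import median
from typing import List, Sequence


def robust_z_scores(values: Sequence[float]) -> List[float]:
    """Modified z-scores based on the median and the median absolute deviation (MAD).
    No labels are needed: the batch itself defines what 'typical' looks like."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        # At least half the values equal the median; treat any deviation as extreme.
        return [0.0 if v == med else math.copysign(math.inf, v - med) for v in values]
    # 1.4826 * MAD estimates the standard deviation when the data are roughly normal.
    return [(v - med) / (1.4826 * mad) for v in values]


def flag_outliers(values: Sequence[float], z_threshold: float = 3.5) -> List[int]:
    """Indices whose modified z-score exceeds the threshold in either direction."""
    return [i for i, z in enumerate(robust_z_scores(values)) if abs(z) > z_threshold]
```

In the batch `[0.10, 0.12, 0.11, 0.09, 0.10, 0.13, 0.11, 0.95]`, only index 7 is flagged. An outlier is not proof of manipulation; it only marks a video as unusual relative to the rest of its batch.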

Collaboration between tech companies and regulatory bodies will also play a crucial role in developing these technologies. By working together, stakeholders can establish standards and best practices for deepfake detection, making it easier for streaming platforms to implement effective solutions. Furthermore, public awareness and educational initiatives about deepfakes can empower viewers to be more discerning when consuming media.

In summary, AI plays a crucial role in identifying deepfake videos on streaming platforms. Because the technology keeps evolving, staying informed about detection methods is essential. Readers are encouraged to follow advances in AI and to support platforms that prioritize video integrity; together, these efforts can foster a safer and more trustworthy digital landscape.

Frequently Asked Questions

How does AI detect deepfake videos on streaming platforms?

AI detects deepfake videos by utilizing advanced algorithms and machine learning models that analyze video content for inconsistencies. These models examine facial movements, audio-visual synchronization, and even micro-expressions to identify alterations that indicate manipulation. By training on large datasets of genuine and fake videos, AI can learn the subtle differences and flag suspicious content for further review.

Why is it important for streaming platforms to identify deepfake videos?

It is crucial for streaming platforms to identify deepfake videos to maintain the integrity of their content and protect viewers from misinformation and potential harm. Deepfakes can be used to spread false narratives, manipulate public opinion, or even defame individuals. By leveraging AI to detect these videos, platforms can foster a safer viewing environment and uphold trust among their user base.

What are the common techniques used by AI to differentiate real videos from deepfakes?

Common techniques used by AI to differentiate real videos from deepfakes include facial recognition technology, deep learning analysis, and digital forensics. These methods focus on identifying anomalies in pixel patterns, inconsistencies in lighting, and unnatural facial movements. Additionally, AI may analyze audio cues to detect mismatches between the voice and the visual representation, enhancing the overall detection capabilities.
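
An audio-visual mismatch check can be sketched by correlating how wide the mouth is open in each frame with the loudness of the audio over the same frame. This assumes both signals were already extracted upstream (for example, lip distance from landmarks and RMS audio energy per frame window, neither shown here); the 0.3 correlation floor and the three-frame lag window are hypothetical. Learned audio-visual models are far more robust than a single correlation, so treat this only as an illustration of the idea.

```python
import math
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation; returns 0.0 if either signal is constant."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / math.sqrt(vx * vy)


def lip_sync_score(mouth_opening: Sequence[float], audio_energy: Sequence[float],
                   max_lag: int = 3) -> float:
    """Best correlation over small frame offsets, allowing for a slight audio/video delay.
    Both inputs hold one value per video frame."""
    if len(mouth_opening) != len(audio_energy):
        raise ValueError("expected one audio-energy value per video frame")
    n = len(mouth_opening)
    best = -1.0
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:  # compare mouth at frame t + lag with audio at frame t
            a, b = mouth_opening[lag:], audio_energy[:n - lag]
        else:         # compare mouth at frame t with audio at frame t - lag
            a, b = mouth_opening[:n + lag], audio_energy[-lag:]
        if len(a) >= 2:
            best = max(best, pearson(a, b))
    return best


def lip_sync_mismatch(mouth_opening: Sequence[float], audio_energy: Sequence[float],
                      min_corr: float = 0.3, max_lag: int = 3) -> bool:
    """True when no small offset makes mouth movement track the audio."""
    return lip_sync_score(mouth_opening, audio_energy, max_lag) < min_corr
```

Searching over a few lags keeps a small, harmless audio delay from being mistaken for a mismatch, while a mouth that moves during silence still scores low.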

Which streaming platforms are actively using AI to combat deepfake videos?

Major video platforms, including YouTube, Facebook, and TikTok, have policies against deceptive manipulated media and use a combination of automated moderation tools and user reports to find and remove it. Some also work with outside researchers and companies that specialize in deepfake detection to strengthen these capabilities.
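
The combination of automated scoring and user reports can be sketched as a priority queue for human moderators. This is a hypothetical design, not a description of how YouTube, Facebook, TikTok, or any other platform works: the names `triage_priority` and `ReviewQueue`, the report weight, the boost cap, and the 0.5 queueing threshold are all assumptions made for illustration.

```python
import heapq
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(order=True)
class ReviewItem:
    sort_key: float                       # negated priority, because heapq is a min-heap
    video_id: str = field(compare=False)


def triage_priority(detector_score: float, user_reports: int,
                    report_weight: float = 0.1, max_report_boost: float = 0.5) -> float:
    """Detector score in [0, 1] plus a capped boost from user reports."""
    return detector_score + min(user_reports * report_weight, max_report_boost)


class ReviewQueue:
    """Hypothetical moderation queue: the most urgent videos are reviewed first."""

    def __init__(self, threshold: float = 0.5) -> None:
        self.threshold = threshold
        self._heap: List[ReviewItem] = []

    def submit(self, video_id: str, detector_score: float, user_reports: int) -> bool:
        """Queue the video if its priority reaches the threshold; return whether it was queued."""
        priority = triage_priority(detector_score, user_reports)
        if priority < self.threshold:
            return False
        heapq.heappush(self._heap, ReviewItem(-priority, video_id))
        return True

    def next_for_review(self) -> Optional[str]:
        return heapq.heappop(self._heap).video_id if self._heap else None
```

Capping the report boost limits how far a burst of reports can move a video up the queue, while still letting enough reports bring an unflagged video to a moderator's attention.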

What can users do to protect themselves from deepfake content on streaming platforms?

Users can protect themselves from deepfake content by being vigilant and critical of the videos they encounter online. It’s essential to verify the source of the content, check for any discrepancies in visuals or audio, and look for credible fact-checking resources when in doubt. Additionally, using platforms that prioritize AI detection and user education regarding deepfakes can significantly reduce the likelihood of being misled by manipulated content.
