AI improves multimodal display input systems in three main ways: it makes user interaction more responsive, widens accessibility, and supports more intuitive interfaces. Technologies such as natural language processing and computer vision let these systems interpret speech, gestures, and visual context rather than relying on a single input channel. In this article, we’ll look at how AI contributes to the sophistication and effectiveness of multimodal input systems.

Understanding Multimodal Display Input Systems

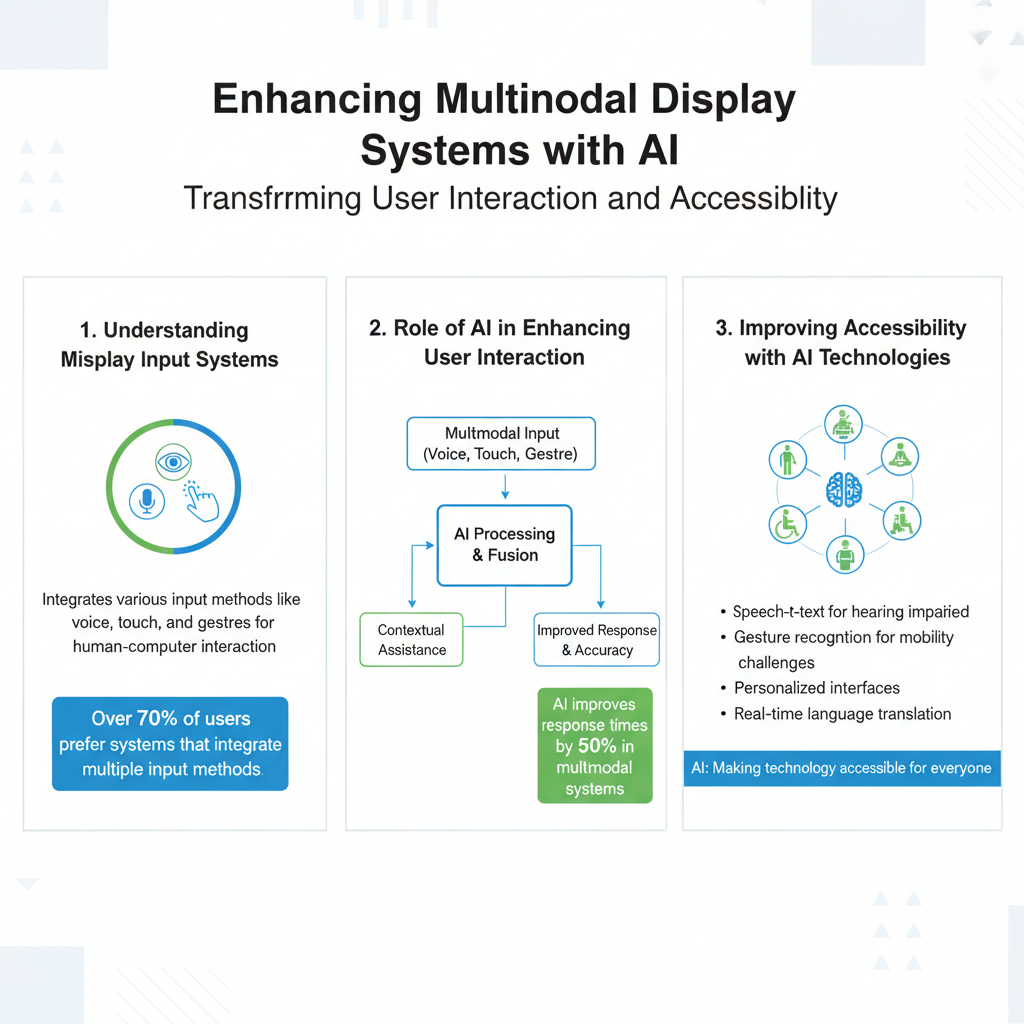

Multimodal display input systems accept and process several forms of user input, combining methods such as voice commands, touch gestures, and visual cues. For example, modern smartphones and smart home devices combine touchscreens, voice recognition, and even facial recognition to create seamless user experiences. Integrating multiple input methods matters because it caters to diverse user preferences and contexts: voice works when your hands are busy, while touch works in a noisy room. By allowing users to interact with technology in the way that feels most natural to them, whether through speaking, swiping, or tapping, these systems foster a more engaging and intuitive user experience.

Role of AI in Enhancing User Interaction

AI enhances user interaction within multimodal systems mainly through personalization and prediction. AI-driven personalization algorithms analyze user behavior and preferences to create tailored experiences. Imagine a smart assistant that learns your schedule and suggests reminders based on your usual routines, or a streaming service that curates content recommendations based on your viewing history. Machine learning models also predict what a user is likely to do or enter next, as a predictive keyboard does when it suggests the next word, which makes systems feel more responsive. By understanding context and anticipating needs, AI enables systems to adapt in real time, leading to smoother and more satisfying interactions.
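
To make the idea of learning from routines concrete, here is a minimal sketch in Python. It assumes the simplest possible approach, counting which action a user takes in each context and suggesting the most frequent one. The class name, context labels, and action names are all hypothetical, and a production system would likely use a trained model rather than raw counts.

```python
from collections import Counter, defaultdict


class NextActionPredictor:
    """Suggests the action a user has most often taken in a given context.

    Illustrative only: a frequency count stands in for a learned model.
    """

    def __init__(self):
        # Maps a context label (e.g. "weekday_morning") to action counts.
        self._counts = defaultdict(Counter)

    def observe(self, context, action):
        """Record that the user performed `action` in `context`."""
        self._counts[context][action] += 1

    def suggest(self, context):
        """Return the most frequent action for `context`, or None if unseen."""
        actions = self._counts.get(context)
        if not actions:
            return None
        return actions.most_common(1)[0][0]


# Hypothetical usage: the assistant has seen three weekday mornings.
predictor = NextActionPredictor()
for action in ["play_news", "play_news", "start_coffee"]:
    predictor.observe("weekday_morning", action)

print(predictor.suggest("weekday_morning"))  # play_news
print(predictor.suggest("weekend_evening"))  # None
```

Returning `None` for an unseen context lets the interface fall back to its default behavior instead of guessing.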

Improving Accessibility with AI Technologies

AI technologies are expanding accessibility, especially for users with disabilities. Tools like voice recognition and gesture control give people ways to interact with devices without traditional input methods. For example, applications with AI-driven speech-to-text allow users with mobility impairments to compose messages or control devices through voice commands. Gesture control systems, enhanced by AI, enable users to navigate interfaces through simple hand movements, making technology more inclusive. AI can also adapt interfaces to individual needs, such as enlarging text for users with low vision or simplifying navigation for those who struggle with complex layouts, so that more people can benefit from technological advancements.

Natural Language Processing in Multimodal Systems

Natural Language Processing (NLP) is a critical component in multimodal systems, facilitating communication between users and devices. NLP enables machines to understand, interpret, and respond to human language, allowing for more natural interactions. For instance, voice-activated assistants like Amazon’s Alexa or Google Assistant first convert speech to text with speech recognition, then use NLP to work out the intent behind a command or query and produce a relevant, conversational response. Chatbots in customer service settings also use NLP to process inquiries and deliver timely information to users. By enhancing input methods with NLP, these systems improve user satisfaction and streamline workflows, making information more accessible and interactions more efficient.
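
The step from a transcribed command to an action is usually described as extracting an intent and its parameters (often called slots). The sketch below illustrates that step in Python with hand-written regular expressions. This is a deliberate simplification: commercial assistants rely on trained language models, and the intent names and patterns here are hypothetical.

```python
import re

# Hypothetical intents and patterns, for illustration only.
# A real assistant would use a trained model instead of regular expressions.
INTENT_PATTERNS = {
    "set_timer": re.compile(
        r"\b(?:set|start) (?:a )?timer for (?P<minutes>\d+) minutes?\b"
    ),
    "lights_on": re.compile(r"\bturn on the (?P<room>\w+) lights?\b"),
}


def parse_command(text):
    """Return (intent, slots) for the first matching pattern.

    Falls back to ("unknown", {}) when no pattern matches.
    """
    normalized = text.lower().strip()
    for intent, pattern in INTENT_PATTERNS.items():
        match = pattern.search(normalized)
        if match:
            return intent, match.groupdict()
    return "unknown", {}


print(parse_command("Please set a timer for 10 minutes"))
print(parse_command("Turn on the kitchen lights"))
print(parse_command("What's the weather like?"))
```

The explicit `"unknown"` fallback matters in a multimodal system, because it is the point where the interface can ask the user to repeat the command or switch to touch input.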

Computer Vision and Its Impact on Interaction

Computer vision is another area where AI enhances multimodal display input systems. It enables machines to interpret and understand visual information from the world around them. For example, gesture recognition technology allows users to control devices with hand movements, eliminating the need for physical contact, which is a real advantage in hygiene-sensitive environments. Object detection enables systems to identify real-world items and respond in a context-aware way. Imagine a smart home system that recognizes an object you’re pointing to and offers relevant information or actions based on that recognition. By incorporating visual inputs, these systems create more engaging and interactive user experiences.
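
The pointing scenario can be broken into two parts: a detector that outputs labeled bounding boxes, and a rule that decides which box the user means. The Python sketch below covers only the second part and assumes the detector and fingertip tracker already exist. The `Detection` class, the `resolve_pointing_target` function, and the 50-pixel tolerance are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from math import hypot


@dataclass
class Detection:
    """One detected object: a label plus a bounding box in image pixels."""
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def contains(self, x, y):
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max

    def area(self):
        return (self.x_max - self.x_min) * (self.y_max - self.y_min)

    def center(self):
        return ((self.x_min + self.x_max) / 2, (self.y_min + self.y_max) / 2)


def resolve_pointing_target(detections, point, max_distance=50.0):
    """Pick the detection the user is most plausibly pointing at.

    Prefers the smallest box containing the point; otherwise falls back to
    the nearest box center within `max_distance` pixels, else returns None.
    """
    x, y = point
    containing = [d for d in detections if d.contains(x, y)]
    if containing:
        return min(containing, key=Detection.area)

    def distance(d):
        cx, cy = d.center()
        return hypot(cx - x, cy - y)

    nearby = [d for d in detections if distance(d) <= max_distance]
    return min(nearby, key=distance) if nearby else None


# Hypothetical detector output for one camera frame.
scene = [
    Detection("lamp", 0, 0, 100, 200),
    Detection("thermostat", 300, 50, 360, 110),
]
target = resolve_pointing_target(scene, (370, 80))
print(target.label if target else "nothing selected")
```

Choosing the smallest containing box is one reasonable tie-breaker when objects overlap, since a small object in front of a large one is usually the intended target.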

Future Trends in AI and Multimodal Systems

As we look to the future, emerging technologies are set to shape the evolution of multimodal input systems. Advancements in AI, particularly in deep learning and neural networks, will make these systems more capable. For instance, we can expect improvements in emotion recognition, allowing systems to adjust their responses to a user’s apparent emotional state. Moreover, the integration of augmented reality (AR) and virtual reality (VR) with multimodal systems will create immersive experiences that blend the physical and digital worlds. As these technologies continue to advance, AI has considerable room to improve user interaction and accessibility, pointing toward technology that is more intuitive and user-centered.

Incorporating AI into multimodal display input systems transforms how users interact with technology, making these systems more dynamic and accessible. By improving user interaction, enhancing accessibility, and leveraging technologies like natural language processing and computer vision, AI is at the forefront of creating user-friendly interfaces. As AI continues to evolve, staying informed about its applications will empower users and developers alike. Explore these advancements and consider how you can leverage them in your own projects or daily technology use. Embracing these innovations not only enhances personal experiences but also fosters inclusivity in our increasingly digital world.

Frequently Asked Questions

How does AI improve the accuracy of multimodal display input systems?

AI enhances the accuracy of multimodal display input systems by utilizing machine learning algorithms to interpret and integrate data from various input sources, such as voice, gesture, and touch. This allows the system to better understand user commands and context, reducing errors in recognition and improving overall performance. By continuously learning from user interactions, AI can refine its models, leading to a more seamless and intuitive user experience.
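
One common way to integrate several input sources is late fusion: each modality produces its own confidence scores, and the system combines them afterwards. The Python sketch below assumes a simple weighted sum. The function name, intent labels, scores, and weights are hypothetical, and real systems may learn the weights or fuse the signals earlier in the pipeline.

```python
def fuse_modalities(scores_by_modality, weights):
    """Combine per-modality confidence scores with a weighted sum.

    scores_by_modality: {"voice": {"intent": confidence, ...}, ...}
    weights: {"voice": weight, ...}; modalities without a weight are ignored.
    Returns (best_intent, fused_scores), or (None, {}) if there is no input.
    """
    fused = {}
    for modality, scores in scores_by_modality.items():
        weight = weights.get(modality, 0.0)
        for intent, confidence in scores.items():
            fused[intent] = fused.get(intent, 0.0) + weight * confidence
    if not fused:
        return None, {}
    best = max(fused, key=fused.get)
    return best, fused


# Hypothetical case: the spoken command is ambiguous on its own,
# but an upward swipe gesture makes the intent clear.
observations = {
    "voice": {"volume_up": 0.45, "volume_down": 0.55},
    "gesture": {"volume_up": 0.90, "volume_down": 0.10},
}
weights = {"voice": 0.6, "gesture": 0.4}

best_intent, fused_scores = fuse_modalities(observations, weights)
print(best_intent)  # volume_up
```

In this example the voice scores alone would select `volume_down`, while the fused result selects `volume_up`, which shows how a second modality can correct a recognition error in the first.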

What are the benefits of incorporating AI in multimodal interfaces?

Incorporating AI in multimodal interfaces offers several benefits, including increased user engagement, enhanced accessibility, and improved efficiency. For instance, AI can tailor responses based on user preferences and behaviors, making interactions more personalized. Additionally, AI-powered systems can recognize and adapt to diverse input methods, allowing users with different abilities to interact with technology more effectively.

Why is multimodal input important for user experience in AI systems?

Multimodal input is crucial for user experience in AI systems because it allows users to interact in more natural and varied ways, closely mimicking human communication. By combining inputs like speech, touch, and visual cues, these systems can better understand user intent, leading to more accurate and relevant responses. This flexibility not only enhances satisfaction but also broadens the potential user base by accommodating different preferences and needs.

Which technologies are commonly used in AI-enhanced multimodal display input systems?

Common technologies used in AI-enhanced multimodal display input systems include automatic speech recognition and natural language processing (NLP) for transcribing and understanding speech, computer vision for gesture recognition, and machine learning models that combine and interpret the resulting signals. These technologies work together to process various forms of input, allowing the system to create a cohesive user experience. Integrating them can lead to innovative applications in fields like virtual reality, smart home systems, and automotive interfaces.

What are some examples of applications that utilize AI in multimodal display input systems?

Examples include virtual assistants (like Siri or Alexa) that respond to voice commands and, on devices with screens, also accept touch input. Other applications include smart home devices that allow users to control settings through multiple inputs, such as voice, mobile apps, or touch panels. Additionally, advanced automotive interfaces use AI to interpret driver commands through speech and touch, which can reduce the time drivers spend looking away from the road.
