AI regulation frameworks are taking shape around the world, and their rollout is vital for promoting the responsible use of artificial intelligence. As countries acknowledge both the immense potential and the inherent risks of AI, they are drafting rules designed to foster innovation while protecting the public’s interests. This article surveys the current state of AI regulation worldwide and examines its implications for businesses and society at large.

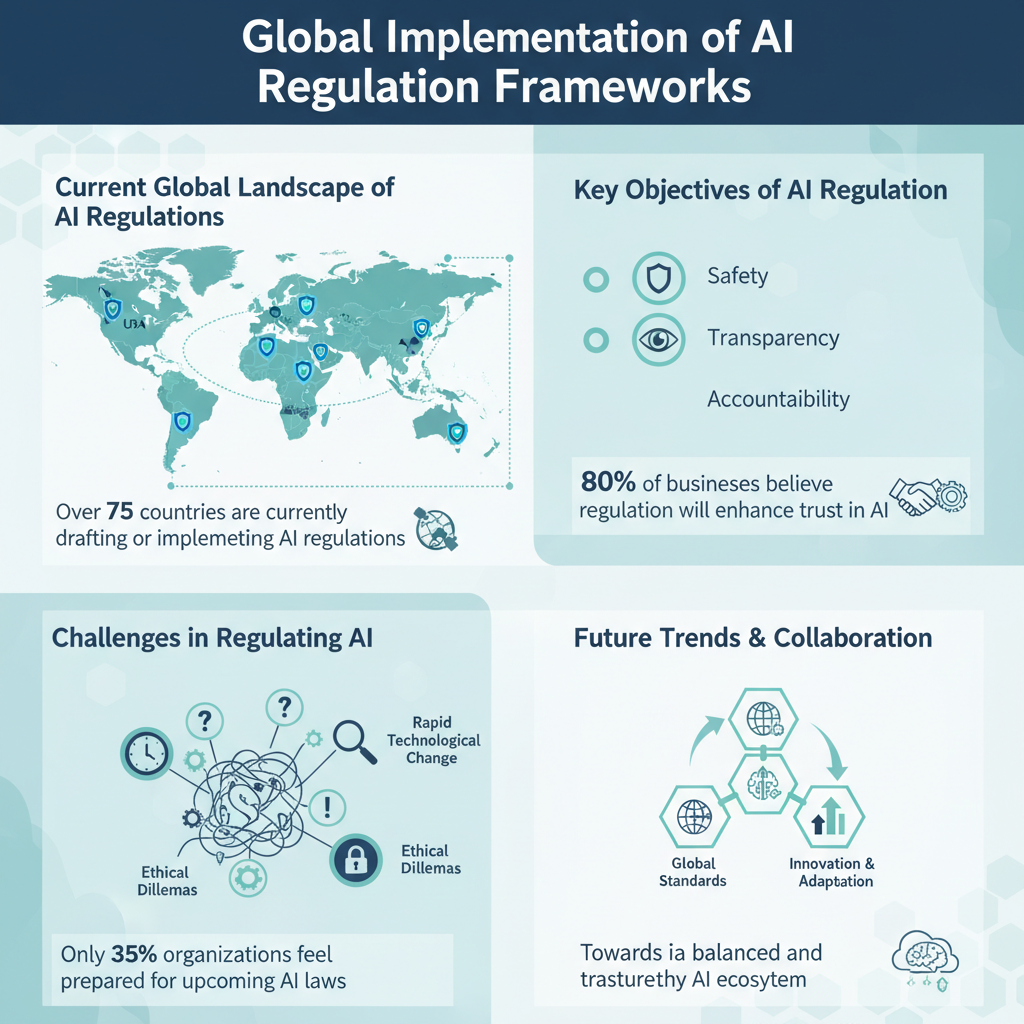

Current Global Landscape of AI Regulations

In recent years, the conversation around AI regulation has gained momentum, and different regions have taken markedly different approaches. The European Union (EU) has taken a proactive stance with its AI Act, proposed in 2021 and formally adopted in 2024, which sorts AI systems by risk level and sets stringent requirements for high-risk applications, such as those used in healthcare and law enforcement. This comprehensive framework aims to ensure transparency, safety, and accountability, requiring providers of high-risk systems to assess and manage risks and to provide clear information about how their systems function.

Conversely, the United States has adopted a more fragmented approach. There is no overarching federal law governing AI; instead, individual states and federal agencies are developing their own rules. The National Institute of Standards and Technology (NIST) published a voluntary AI Risk Management Framework in January 2023 to guide organizations in the responsible development of AI, emphasizing fairness, transparency, and privacy.

In Asia, China has moved quickly to regulate AI. The Chinese government has made AI development a national priority and has issued binding rules for specific applications, including recommendation algorithms, deep synthesis (deepfake) technologies, and generative AI services, with a focus on ethical usage, content control, and data governance. These rules reflect a centralized approach with significant state oversight, which raises questions about privacy and individual rights.

Compared side by side, these frameworks diverge clearly: the EU emphasizes strict compliance and public accountability, while the U.S. approach leans toward innovation and flexibility. China, meanwhile, combines rapid development with tight state control. Each approach reflects the socio-political context of the jurisdiction that produced it.

Key Objectives of AI Regulation

The primary goals of AI regulation revolve around ensuring safety and accountability in AI systems while promoting ethical development and usage. Safety is paramount, as AI systems are increasingly integrated into critical sectors such as healthcare, transportation, and finance. Regulation aims to mitigate risks associated with AI errors, biases, and unintended consequences that could endanger public safety.

Accountability is another essential objective, with regulations requiring organizations to be responsible for the outcomes of their AI systems. This means that businesses must implement mechanisms for tracing decisions made by AI, ensuring that they can explain and justify outcomes to users and regulatory bodies alike.
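One common way to support this kind of traceability is an append-only decision log. The Python sketch below assumes a hypothetical `DecisionRecord` schema written to a JSON Lines file; no regulation discussed here prescribes this format, and the field names, `record_decision`, and `load_decisions` are illustrative only. It stores a SHA-256 digest of each decision’s inputs rather than the raw data, which lets an auditor check a retained copy of the inputs against the log without the log itself holding personal data.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """One traceable AI decision (hypothetical schema, not a regulatory standard)."""
    model_name: str
    model_version: str
    input_digest: str   # SHA-256 of the inputs, so the log need not store raw personal data
    output: str
    reason: str         # human-readable justification for users or auditors
    timestamp: str      # UTC time in ISO 8601 format


def record_decision(log_path, model_name, model_version, inputs, output, reason):
    """Append one decision to a JSON Lines audit log and return the record.

    `inputs` must be JSON-serializable (e.g. a dict of feature values).
    """
    # Canonical JSON (sorted keys) so identical inputs always hash to the same digest.
    canonical = json.dumps(inputs, sort_keys=True).encode("utf-8")
    record = DecisionRecord(
        model_name=model_name,
        model_version=model_version,
        input_digest=hashlib.sha256(canonical).hexdigest(),
        output=output,
        reason=reason,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    # Append mode: earlier entries are never rewritten.
    with open(log_path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record)) + "\n")
    return record


def load_decisions(log_path):
    """Read every logged decision back, e.g. for an audit or a user's request."""
    with open(log_path, encoding="utf-8") as f:
        return [DecisionRecord(**json.loads(line)) for line in f if line.strip()]
```

For example, `record_decision("decisions.jsonl", "credit-scorer", "1.4.2", {"income": 52000}, "declined", "debt ratio above threshold")` appends one line to the log. Sorting keys before hashing means the same inputs produce the same digest regardless of key order.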

Promoting ethical AI development is equally important. Regulations aim to prevent discrimination and bias in AI algorithms, ensuring that these technologies serve all segments of society fairly. This involves setting standards for data collection, processing, and utilization, as well as fostering transparency in AI operations. By embedding ethical principles into the regulatory framework, nations can encourage the development of technology that respects human rights and societal values.

Challenges in Regulating AI

Despite the growing awareness of the need for AI regulation, several challenges persist. One of the most significant hurdles is the rapid pace of technological advancements, which often outstrip the ability of regulators to keep up. As AI technologies evolve, they introduce new complexities that existing regulations may not adequately address, leading to potential gaps in oversight.

Another challenge lies in balancing innovation with ethical considerations. Overly stringent regulations may stifle innovation and hinder the development of beneficial AI applications, while overly lenient ones could expose society to risks and harms. Policymakers must navigate this tightrope, crafting rules that keep the public safe without discouraging developers from building new products.

Additionally, there is a lack of consensus on global standards for AI regulation. Different regions and countries may have conflicting regulations, making it challenging for businesses operating internationally to comply. This inconsistency can lead to confusion and increased costs for companies, especially startups that may not have the resources to navigate complex regulatory landscapes.

Case Studies of Effective AI Regulation

Examining successful regulatory models can provide valuable insights into effective AI governance. For instance, the UK’s Information Commissioner’s Office (ICO) has developed a framework for AI that emphasizes data protection and privacy rights. By focusing on maintaining public trust, the ICO has established guidelines that encourage organizations to adopt ethical practices while still promoting innovation.

Similarly, the EU’s General Data Protection Regulation (GDPR) shows how a framework that is not AI-specific can still have significant implications for AI. Although the GDPR is a data protection law, it has set a benchmark for privacy standards globally and shapes how AI systems handle personal data; its Article 22, for example, gives individuals the right, subject to exceptions, not to be subject to decisions based solely on automated processing that produce legal or similarly significant effects. Organizations that process the personal data of people in the EU must comply with the GDPR by providing transparency and user control over that data, which directly affects how AI systems are designed.

However, not all regulatory efforts have succeeded. Early rollouts of AI rules in some jurisdictions have revealed gaps and unintended consequences. Critics have argued, for example, that overly broad definitions of what constitutes “high-risk” AI can impose excessive regulatory burdens on low-risk applications and hinder innovation. Learning from these experiences is crucial for refining regulatory approaches so that they are both effective and pragmatic.

Impact of AI Regulations on Businesses

AI regulations profoundly influence how businesses approach the development of AI technologies. Companies must adapt their strategies to comply with a growing array of regulations, which often necessitates changes in data management practices, algorithm design, and operational transparency. For instance, businesses may need to invest in auditing processes to ensure their AI systems are unbiased and accountable.
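As one illustration of what a bias audit might check, the Python sketch below computes each group’s approval (selection) rate and compares it with the highest group’s rate. The 0.8 threshold borrows the “four-fifths” rule of thumb from U.S. employment-selection guidelines; it is an assumption chosen for illustration, a screening heuristic rather than a legal standard for AI systems, and the function names are hypothetical. With 8 of 10 applicants approved in group A and 5 of 10 in group B, group B’s ratio is 0.5 / 0.8 = 0.625, so it would be flagged for review.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """Share of favorable decisions per group.

    `outcomes` is an iterable of (group, approved) pairs, where approved is a bool.
    """
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in outcomes:
        totals[group] += 1
        if approved:
            approvals[group] += 1
    return {g: approvals[g] / totals[g] for g in totals}


def disparate_impact_check(outcomes, threshold=0.8):
    """Compare each group's selection rate with the highest-rate group.

    Returns {group: (ratio, flagged)}. The 0.8 default mirrors the "four-fifths"
    rule of thumb; it is a screening heuristic, not a legal test of fairness.
    """
    rates = selection_rates(outcomes)
    best = max(rates.values())
    report = {}
    for group, rate in rates.items():
        # If no group was ever approved, treat every ratio as 1.0 (nothing to compare).
        ratio = rate / best if best > 0 else 1.0
        report[group] = (ratio, ratio < threshold)
    return report
```

A flagged ratio signals that a closer look is warranted; it does not by itself establish that a system is discriminatory, and real audits typically combine several metrics.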

While compliance can pose challenges, it can also bring benefits. Regulations that promote ethical practices can enhance public trust in AI technologies, leading to greater adoption. Companies that prioritize compliance and ethical considerations may gain a competitive advantage, as consumers increasingly favor businesses that demonstrate responsibility and transparency.

Moreover, the regulatory landscape can create new opportunities for businesses to develop compliance-related solutions. As organizations seek to navigate complex regulations, there is a growing demand for software and services that facilitate compliance, risk assessment, and data governance. Entrepreneurs and tech startups that can provide these solutions are well-positioned to thrive in the evolving market.

Future Trends in AI Regulation

Looking ahead, several trends are likely to shape AI regulation. One is a move toward more harmonized global standards. As AI spreads across sectors worldwide, the need for consistent rules that facilitate international trade and cooperation will become harder to ignore. International bodies are already laying groundwork: the OECD adopted its AI Principles in 2019, and ISO and IEC have published standards such as ISO/IEC 42001 for AI management systems, which could help bridge the gap between differing national regulations.

Another trend is the increasing role of public engagement in the regulatory process. As stakeholders become more vocal about their concerns regarding AI’s impact on society, regulators will likely prioritize inclusive dialogue to ensure that diverse perspectives inform policy decisions. Engaging with affected communities and experts will be crucial in developing regulations that are both effective and reflective of societal values.

Lastly, the integration of AI itself into the regulatory process is on the horizon. Tools powered by AI can assist regulators in monitoring compliance, analyzing data for insights, and even predicting potential risks associated with AI systems. This shift toward leveraging technology in regulation could streamline the oversight process and enhance the effectiveness of regulatory frameworks.

In conclusion, the global push to implement AI regulation frameworks is crucial for balancing innovation with ethical responsibility. Businesses must stay informed about these evolving regulations to navigate the landscape effectively and seize opportunities for growth while ensuring compliance. Engaging with local regulatory bodies will help organizations understand how these frameworks might affect their operations and contribute to the responsible use of AI. By actively participating in this dialogue, businesses can help shape a future where AI technologies benefit society as a whole.

Frequently Asked Questions

What is the global AI regulation framework and why is it important?

There is no single global AI regulation framework. The term usually refers to the collection of national laws, regional regulations, and international guidelines that govern the development, deployment, and use of artificial intelligence across countries. These frameworks are important because they aim to ensure the ethical use of AI, protect individual rights, mitigate risks associated with biased algorithms, and promote transparency in AI decision-making. As AI becomes part of everyday life, a cohesive regulatory approach is essential for fostering public trust and encouraging innovation while safeguarding societal values.

How are different countries approaching AI regulation?

Different countries are approaching AI regulation through a mix of legislative measures, ethical guidelines, and industry standards. For instance, the European Union has adopted the Artificial Intelligence Act, which categorizes AI systems by risk level and imposes stricter requirements on high-risk applications. In contrast, the United States has taken a more decentralized approach, relying on voluntary guidelines and frameworks while leaving significant regulatory authority to individual states and federal agencies. These varying strategies reflect differing national priorities and societal values regarding technology and innovation.

Why is there a need for international cooperation in AI regulation?

There is a pressing need for international cooperation in AI regulation to address the global nature of technology development and deployment. AI systems often cross borders, and inconsistent regulations can lead to regulatory arbitrage, where companies exploit weaker regulations in certain jurisdictions. Collaborative efforts can help establish common ethical standards, reduce risks of harm from AI applications, and facilitate the sharing of best practices among nations. This cooperation is vital for managing the societal impacts of AI and ensuring that advancements benefit all of humanity.

What are the key challenges in implementing an effective AI regulatory framework?

Key challenges in implementing an effective AI regulatory framework include the rapid pace of technological advancement, the complexity of AI systems, and the diverse interests of stakeholders involved. Regulators often struggle to keep up with the evolving landscape of AI, which can outpace existing laws and guidelines. Additionally, balancing innovation with safety and ethical considerations poses a significant challenge, as overly strict regulations could stifle creativity and development. Engaging diverse stakeholders—including technologists, ethicists, and the public—is essential to create comprehensive regulations that address these complexities.

Which industries are most affected by AI regulation and how?

Industries most affected by AI regulation include healthcare, finance, transportation, and technology. In healthcare, regulations focus on data privacy and the ethical use of AI in diagnostics and treatment planning. In finance, credit scoring models face scrutiny for bias and fairness, while algorithmic trading is monitored for its effects on market stability. The transportation industry must comply with safety regulations for autonomous vehicles. As AI regulations evolve, industries must adapt to ensure compliance while leveraging AI technologies to improve efficiency and innovation.
