AI systems detect and block malicious video content by analyzing video data for harmful elements. Using techniques such as machine learning and computer vision, they can identify and filter out inappropriate content, helping to provide a safer viewing experience for users. This proactive approach matters because malicious content ranges from hate speech to misinformation and poses risks not only to individuals but also to the integrity of platforms. In this article, we explore how AI technology works in this context and what it means for online safety.

Understanding Malicious Video Content

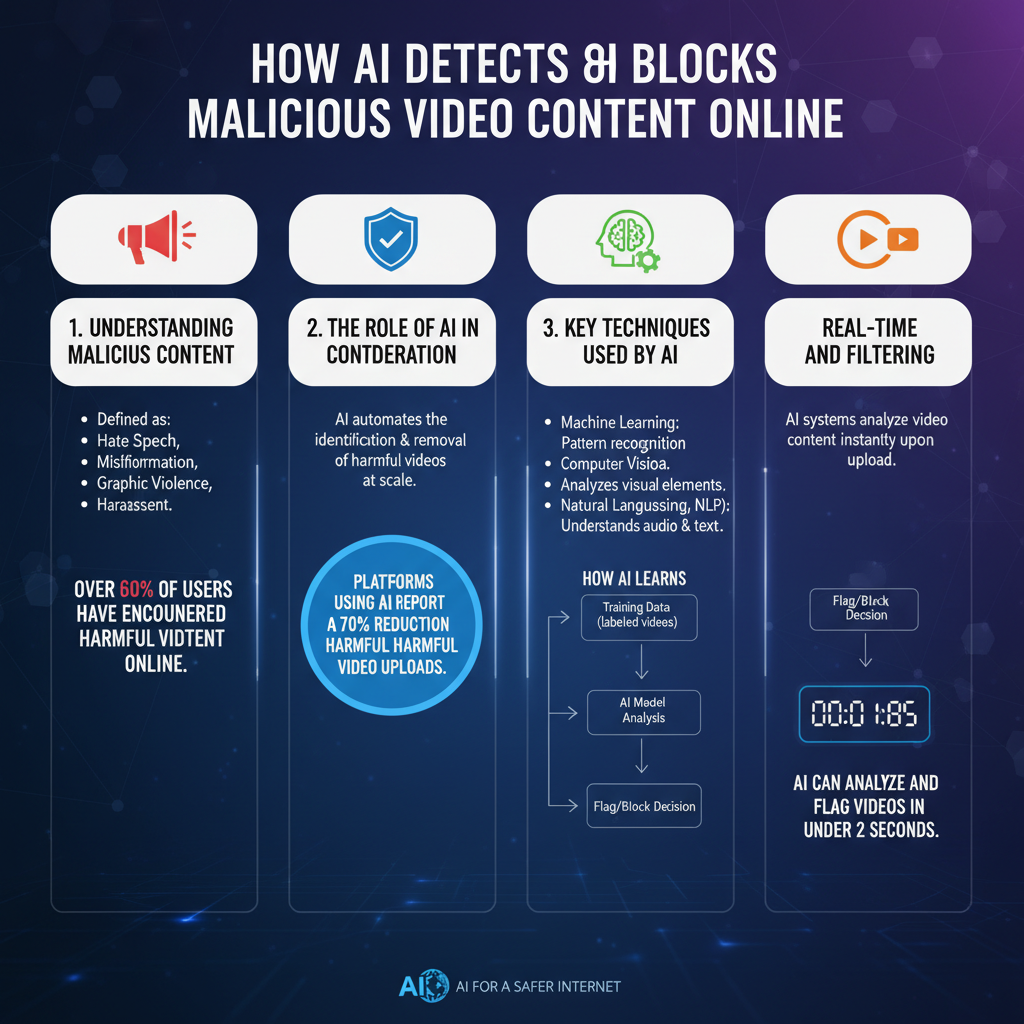

Malicious video content encompasses a wide array of harmful elements that can affect viewers and communities. At its core, malicious video content includes anything that promotes hate speech, spreads misinformation, incites violence, or exploits vulnerable individuals. For instance, videos that perpetuate conspiracy theories or misrepresent facts can mislead viewers and contribute to societal polarization. Hate speech videos often target specific groups and can lead to real-world consequences, including violence and discrimination.

The impact of such content is profound. It can create toxic online environments, diminish user trust, and lead to harmful offline behaviors. For platforms, hosting this type of content can result in reputational damage, loss of user engagement, and even legal consequences. Therefore, understanding the nature of malicious video content is crucial for AI systems designed to combat it.

The Role of AI in Content Moderation

AI plays a transformative role in enhancing traditional content moderation methods. Historically, platforms relied heavily on human moderators to sift through videos, a process that is both time-consuming and prone to error. With AI integration, moderation has become faster and more efficient, allowing platforms to analyze vast amounts of content in real time.

One of the primary benefits of using AI in content moderation is scalability. For example, platforms like YouTube and Facebook receive millions of uploads every day. AI can quickly assess these videos against community standards and flag potentially harmful content for further review, enabling a more responsive approach to content management. Moreover, AI systems can be retrained on user reports and moderator feedback, which improves their accuracy over time.

Key Techniques Used by AI

To effectively identify malicious video content, AI employs several sophisticated techniques. One of the most significant is machine learning, where algorithms are trained on vast datasets containing examples of both acceptable and unacceptable content. This training process allows AI systems to detect harmful patterns and make informed decisions about new uploads. For instance, if a new video contains language that resembles hate speech found in previously flagged videos, the AI can quickly identify it as potentially harmful.
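As a rough illustration of training on labeled examples, the sketch below fits a tiny naive Bayes classifier on video transcripts. The class name `TranscriptClassifier`, the labels, and the four training sentences are hypothetical placeholders; real systems rely on far larger datasets and more capable models.

```python
import math
from collections import Counter


class TranscriptClassifier:
    """Toy multinomial naive Bayes over whitespace-separated words."""

    def __init__(self):
        self.word_counts = {}         # label -> Counter of words seen for that label
        self.doc_counts = Counter()   # label -> number of training examples
        self.vocab = set()            # every word seen during training

    def train(self, examples):
        """examples: iterable of (transcript_text, label) pairs."""
        for text, label in examples:
            words = text.lower().split()
            self.word_counts.setdefault(label, Counter()).update(words)
            self.doc_counts[label] += 1
            self.vocab.update(words)

    def predict(self, text):
        """Return the label with the highest log-probability for the text."""
        words = text.lower().split()
        total_docs = sum(self.doc_counts.values())
        best_label, best_score = None, -math.inf
        for label, counts in self.word_counts.items():
            # Log prior plus log likelihoods, with add-one smoothing
            # so unseen words do not zero out the probability.
            score = math.log(self.doc_counts[label] / total_docs)
            denom = sum(counts.values()) + len(self.vocab)
            for word in words:
                score += math.log((counts[word] + 1) / denom)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Hypothetical labeled transcripts, for illustration only.
training_examples = [
    ("how to bake sourdough bread at home", "acceptable"),
    ("weekend hiking trip highlights", "acceptable"),
    ("those people are vermin and should be attacked", "harmful"),
    ("join us to attack them tonight", "harmful"),
]

clf = TranscriptClassifier()
clf.train(training_examples)
new_upload_label = clf.predict("we should attack those people")
benign_label = clf.predict("bread baking highlights")
```

Word overlap with previously flagged transcripts pulls the first new transcript toward the harmful label, which mirrors the "seen in previously flagged videos" intuition described above.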

Another crucial component is computer vision technology, which analyzes visual elements within videos. This technology can assess facial expressions, gestures, and even the context of scenes to determine if they are appropriate. Consider a video that features graphic violence or explicit imagery; computer vision can detect these elements and flag the content before it reaches viewers.
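Scoring every frame of every video is expensive, so one common pattern is to sample frames and flag the video if any sampled frame scores above a threshold. The sketch below shows only that sampling and aggregation logic. The function names, the `threshold` and `every_n` values, and the `mean_intensity` scorer are assumptions made for illustration; a real system would call a trained vision model in place of the toy scorer.

```python
from typing import Callable, List, Sequence

Frame = Sequence[float]  # stand-in for decoded pixel data


def sample_frames(frames: Sequence[Frame], every_n: int) -> List[Frame]:
    """Keep every n-th frame to limit how many frames are scored."""
    return list(frames[::every_n])


def video_is_flagged(
    frames: Sequence[Frame],
    score_frame: Callable[[Frame], float],
    every_n: int = 5,
    threshold: float = 0.8,
) -> bool:
    """Flag the video if any sampled frame scores at or above the threshold."""
    return any(score_frame(f) >= threshold for f in sample_frames(frames, every_n))


def mean_intensity(frame: Frame) -> float:
    """Toy scorer (hypothetical): a real system would use a vision model here."""
    return sum(frame) / len(frame)


# Ten low-scoring frames, with one high-scoring frame at index 5.
frames = [[0.1, 0.2] for _ in range(10)]
frames[5] = [0.9, 0.95]
caught = video_is_flagged(frames, mean_intensity, every_n=5)

# The same high-scoring frame at index 3 falls between sampled frames.
frames_between = [[0.1, 0.2] for _ in range(10)]
frames_between[3] = [0.9, 0.95]
missed = video_is_flagged(frames_between, mean_intensity, every_n=5)
```

The second case shows the trade-off: a larger sampling interval lowers cost but can skip over a short harmful segment.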

Real-time Detection and Filtering

One of the most impressive capabilities of AI systems is their ability to operate in real time. When a user uploads a video, AI algorithms can begin analyzing the content immediately, scanning for harmful elements and patterns. This swift detection is crucial in preventing malicious videos from gaining traction and reaching a wider audience.

Once a video is flagged, it enters a review process. Some platforms utilize a combination of AI and human moderators to ensure accuracy. For example, while AI can quickly identify a video that contains hate speech, human moderators can provide context, ensuring that nuances are not overlooked. This collaboration enhances the overall effectiveness of content moderation and helps maintain a safe online environment.
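One simple way to combine automated scoring with human review is to route each video by the model's confidence score. The following sketch assumes a score between 0 and 1 and two thresholds; the names `Action` and `route` and the threshold values are illustrative choices, not any platform's documented policy.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    HUMAN_REVIEW = "human_review"
    BLOCK = "block"


def route(score: float, review_threshold: float = 0.5,
          block_threshold: float = 0.95) -> Action:
    """Map a model confidence score in [0, 1] to a moderation action."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= block_threshold:
        return Action.BLOCK          # very confident: block automatically
    if score >= review_threshold:
        return Action.HUMAN_REVIEW   # uncertain: send to a human moderator
    return Action.ALLOW              # low score: publish normally


decisions = [route(s) for s in (0.10, 0.70, 0.99)]
```

Only the uncertain middle band goes to moderators, so human attention is spent where context and nuance matter most.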

Challenges in AI Detection

Despite the advancements in AI technology, challenges remain in the detection of malicious video content. One significant hurdle is the occurrence of false positives and negatives. A false positive occurs when a benign video is incorrectly flagged as harmful, which can frustrate creators and stifle free expression. Conversely, a false negative happens when malicious content slips through the cracks, posing risks to viewers.
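False positives and false negatives can be measured by comparing model decisions with ground-truth labels. The sketch below computes precision, recall, and false positive rate from two parallel lists of booleans; the function name and the sample data are made up for illustration.

```python
def moderation_metrics(predicted, actual):
    """Compare model flags with true labels (True means harmful)."""
    pairs = list(zip(predicted, actual))
    tp = sum(1 for p, a in pairs if p and a)          # harmful, flagged
    fp = sum(1 for p, a in pairs if p and not a)      # benign, flagged
    fn = sum(1 for p, a in pairs if not p and a)      # harmful, missed
    tn = sum(1 for p, a in pairs if not p and not a)  # benign, passed

    def ratio(num, den):
        return num / den if den else 0.0

    return {
        "false_positives": fp,
        "false_negatives": fn,
        "precision": ratio(tp, tp + fp),            # share of flags that were right
        "recall": ratio(tp, tp + fn),               # share of harmful videos caught
        "false_positive_rate": ratio(fp, fp + tn),  # share of benign videos flagged
    }


# Hypothetical results for six videos.
predicted = [True, True, False, False, True, False]
actual = [True, False, False, True, True, False]
metrics = moderation_metrics(predicted, actual)
```

Here one benign video was flagged (a false positive) and one harmful video was missed (a false negative), giving a precision and recall of 2/3 each.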

Moreover, ethical considerations surrounding AI algorithms cannot be ignored. Bias in AI systems can stem from the datasets they are trained on, potentially leading to disproportionate targeting of specific groups or ideas. For instance, if an AI system is trained predominantly on content from one culture or perspective, it may misinterpret or overlook harmful elements in content from other backgrounds. To mitigate these issues, ongoing training and diverse datasets are essential.
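One basic check for this kind of bias is to compare how often benign content is wrongly flagged across groups. The sketch below computes a false positive rate per group; the group names `lang_a` and `lang_b` and the records are invented for the example, and a real audit would need much more data and care in how groups are defined.

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """records: iterable of (group, predicted_harmful, actually_harmful)."""
    flagged = defaultdict(int)  # benign videos wrongly flagged, per group
    benign = defaultdict(int)   # all benign videos, per group
    for group, predicted_harmful, actually_harmful in records:
        if not actually_harmful:
            benign[group] += 1
            if predicted_harmful:
                flagged[group] += 1
    return {group: flagged[group] / benign[group] for group in benign}


# Hypothetical audit sample: (group, model flagged it, truly harmful).
records = [
    ("lang_a", True, False), ("lang_a", False, False),
    ("lang_a", False, False), ("lang_a", True, True),
    ("lang_b", True, False), ("lang_b", True, False),
    ("lang_b", False, False), ("lang_b", True, True),
]
rates = false_positive_rate_by_group(records)
```

In this made-up sample, benign videos in `lang_b` are flagged twice as often as those in `lang_a`, the kind of gap that would prompt a closer look at the training data.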

Future of AI in Video Content Moderation

The future of AI in video content moderation is bright, with several innovations on the horizon that could enhance detection capabilities. For instance, advancements in natural language processing (NLP) could lead to better understanding and context analysis of spoken or written content within videos. This could enable AI to differentiate between discussions of sensitive topics and harmful rhetoric more effectively.

Additionally, continuous learning and adaptation in AI systems are vital to combat evolving threats. As malicious actors develop new tactics to bypass detection, AI must evolve to recognize these changes. Implementing feedback loops where human moderators can provide insights on flagged content could further refine AI algorithms, enhancing their efficacy over time.
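One lightweight form of such a feedback loop is to recalibrate the flagging threshold from moderator verdicts. The sketch below picks the threshold that maximizes the F1 score on reviewed videos. The function name `best_threshold` and the review data are hypothetical, and F1 is just one possible objective; a platform might weight false negatives more heavily.

```python
def best_threshold(reviews):
    """reviews: list of (model_score, moderator_confirmed_harmful) pairs.

    Tries each observed score as a threshold and returns the
    (threshold, f1) pair with the highest F1 score.
    """
    best_t, best_f1 = None, -1.0
    for t in sorted({score for score, _ in reviews}):
        tp = sum(1 for s, harmful in reviews if s >= t and harmful)
        fp = sum(1 for s, harmful in reviews if s >= t and not harmful)
        fn = sum(1 for s, harmful in reviews if s < t and harmful)
        f1 = 2 * tp / (2 * tp + fp + fn) if tp else 0.0
        if f1 > best_f1:
            best_t, best_f1 = t, f1
    return best_t, best_f1


# Hypothetical moderator verdicts on previously flagged videos.
reviews = [
    (0.95, True), (0.90, True), (0.80, False),
    (0.70, True), (0.60, False), (0.40, False),
]
threshold, f1 = best_threshold(reviews)
```

Rerunning this as new verdicts arrive lets the threshold drift with the data, without retraining the underlying model.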

AI technology plays a critical role in maintaining the integrity of online video content by detecting and blocking malicious material. By combining various techniques and constantly evolving, AI systems are becoming increasingly effective in protecting users from harmful content. For anyone interested in how technology safeguards our online environments, understanding these processes is essential. As we move forward, collaboration between AI and human moderators will be key in creating a safer digital landscape for everyone.

Frequently Asked Questions

What technologies do AI systems use to detect malicious video content online?

AI systems utilize a combination of machine learning algorithms, computer vision, and natural language processing to identify malicious video content. These technologies analyze visual and audio elements, detect harmful keywords or phrases in video descriptions and titles, and recognize patterns associated with previously flagged content. By continuously learning from new data, AI can adapt its detection methods to provide more accurate and timely responses.

How does AI differentiate between harmful and harmless video content?

AI differentiates between harmful and harmless video content by using trained models that categorize videos based on specific criteria. These models assess various factors such as the context of the video, user interactions, and metadata. For instance, an AI might flag a video for containing hate speech or graphic violence by analyzing speech patterns and visual cues, while allowing benign content to pass through based on established norms and community guidelines.

Why is it important to use AI for blocking malicious video content?

Using AI to block malicious video content is crucial for maintaining a safe online environment for users, especially for platforms that host user-generated content. AI can process vast amounts of video data in real time, which is essential for identifying and removing harmful material quickly, at a scale far beyond the capacity of human moderators working alone. This proactive approach helps prevent the spread of misinformation, hate speech, and other dangerous content, thereby fostering a healthier digital community.

Which platforms are currently using AI to monitor and block malicious videos?

Major platforms such as YouTube, Facebook, and TikTok leverage AI technologies to monitor and block malicious videos. These services utilize advanced algorithms to scan uploaded content for policy violations and harmful behavior. Additionally, many news and streaming platforms are adopting similar technologies to ensure that their content remains safe and adheres to community standards, thereby enhancing user trust and engagement.

What are the best practices for users to report malicious video content effectively?

To report malicious video content effectively, users should utilize the reporting features provided by the platform, providing clear descriptions and specific timestamps of the offensive material. Including relevant context, such as why the content is harmful or violates community guidelines, can significantly aid AI systems in processing the report. Additionally, users should stay informed about the platform’s reporting policies and guidelines to ensure their reports are accurate and actionable.
